Peter Lobner

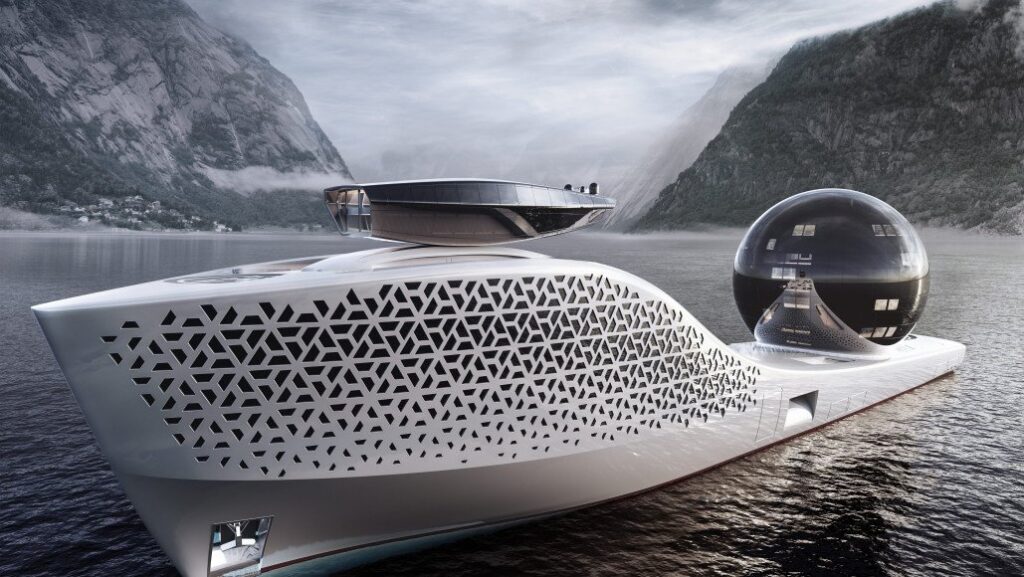

In early April 2021, a flurry of articles described the beautiful, futuristic, nuclear-powered eco-yacht conceived by entrepreneur Aaron Olivera, CEO of Earth 300 (https://earth300.com), and introduced in Singapore as his concept for a signature vessel for conducting environmental research and raising environmental awareness around the world.

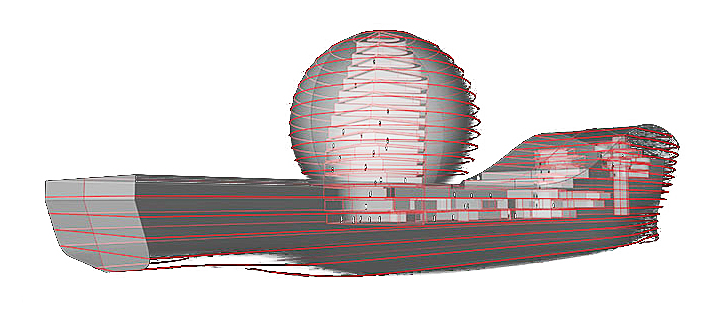

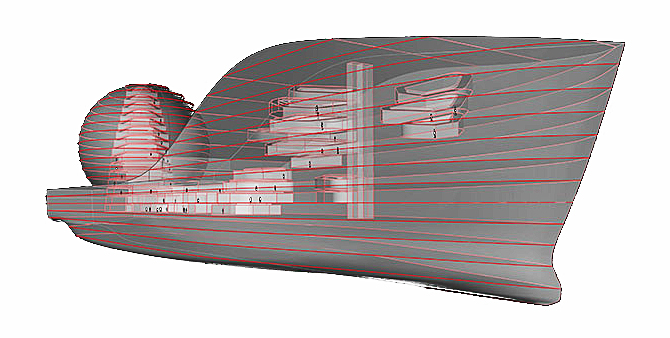

This sleek yacht is almost 300 meters long with a prominent cantilevered observation deck near the bow and a 13-story glass “science sphere” amidships. Olivera describes this vessel as follows:

“Earth 300 it is an extreme technology platform for science, exploration and innovation at sea. Its mission is to ring the ecological alarm on a global scale and combat climate change. Using technology it will quickly scale and deploy solutions to market. Its ultimate ambition is to inspire billions of people to contribute to the preservation of our shared planet, and becoming a sustainable and future worthy civilization.”

The ship’s design was developed by Ivan Salas Jefferson, founder of Iddes Yachts (https://iddesyachts.com), in collaboration with the Polish naval architecture firm NED (https://www.ned-project.eu). Mikal Bøe is the CEO of London-based Core Power (https://corepower.energy), which will supply the next-generation, inherently safe marine molten salt reactor (m-MSR) power plant, using MSR technology developed by the US nuclear company TerraPower (https://www.terrapower.com) that was co-founded by Bill Gates.

Source: Earth 300

The current design has taken six years and $5 million to develop. Earth 300 reports that it is making good progress toward getting an Approval in Principle (AIP) from RINA (formerly Registro Italiano Navale). RINA is a founding member of the International Association of Classification Societies (IACS), which promotes safer and cleaner shipping worldwide. The AIP is a framework used by RINA to review and approve innovative and novel concepts that are not covered by traditional prescriptive classification rules, while ensuring a level of safety in line with current marine industry practice. It is a risk-based approach to classification that allows new designs and novel concepts to be validated by demonstrating equivalent safety.

Following the AIP, Earth 300 should be able to request construction quotes from one or more shipyards, likely in Europe and/or South Korea. The ship will be equipped with 22 laboratories for about 160 scientists, cutting-edge artificial intelligence (AI) and robotics systems, and facilities for operating helicopters and submersible and semi-submersible vehicles. Earth 300 executives reportedly estimated that the total construction cost will be between $500 million and $700 million.

Source: Earth 300

Once in operation, the ship is certain to command attention wherever it goes, as a recognizable symbol for environmental protection. This renown may be enough to attract wealthy tourists willing to pay $3 million for a 10-day cruise in one of the 10 luxury suites with private balconies, with accommodations for personal staff in a separate set of cabins. That sort of money will buy a lot of selfies, Instagram posts and some durable bragging rights.

The ship is designed to accommodate 425 people, including the ship’s crew, scientists, and the group of wealthy tourists paying full price. In addition, it has been reported that Olivera envisages inviting groups of other people to travel at a lower price or even for free. For example, 10 suites would be made available to what Olivera calls Very Interesting Persons – people from all walks of life who would bring unique experience or knowledge to the voyage. In addition, some lucky artists, explorers and students may travel for free.

While I’m impressed with the general concept of this ship, I feel that the primary benefit of this grand vessel can’t be to serve as a mobile marine “mixer” for a few very wealthy individuals to associate with scientists, some elite Very Interesting Persons, and a patchwork of others interested in environmental protection.

Like the 3 AM infomercial says, “But wait, there’s more.” Research performed aboard the ship would be “open source” and shared with other research efforts around the world. That’s great, but more information is needed on the meaningful research programs that would be conducted on the Earth 300 vessel in segments that match the schedule and route of what is essentially a cruise ship. It seems that a much less expensive dedicated vessel could accomplish the same research while not serving as an environmental sideshow on a cruise ship.

With the ship scheduled to launch in 2025, the vessel itself will be ready many years before the planned marine molten salt reactors (m-MSRs) have been developed and approved by the appropriate nuclear and marine regulatory agencies. Therefore, it is likely that the vessel will be designed to operate initially with a conventional marine power plant running on synthetic “renewable” fuels. This isn’t exactly a big step in the right direction for helping to reduce the carbon emissions from worldwide commercial marine transportation.

Like the 3 AM infomercial says, “But wait, there’s more,” or at least, there should be.

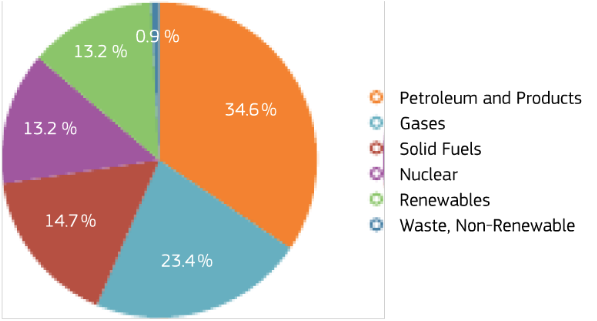

Core Power, the developer of the m-MSR planned for the Earth 300 vessel, is designing its 15 MWe inherently safe micro-reactor system as a zero-carbon replacement for the fossil-fueled power plants in many commercial marine vessels. On its website, Core Power presents the following business case:

“Over the next few decades as many as 60,000 ships must transition from combustion of fossil fuels to zero-emission propulsion. The UN’s maritime agency IMO has mandated with unanimous approval from 197 countries that shipping must reduce emissions by 50% of the 2008 total, before 2050. This means an actual emission reduction of almost 90%, by 2050. MSR technology being developed by the consortium could achieve that goal, by powering production of green sustainable fuels for smaller ships and providing onboard electric power for large ships, with zero emissions as standard.”
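The jump from a 50% target to “almost 90%” in the quote above is easier to follow with a little arithmetic. The sketch below is one possible reading, not Core Power’s stated method: it assumes the larger figure measures the cut relative to what emissions would be in 2050 if shipping kept growing with no action taken. The function names and the growth multiples are hypothetical and chosen only for illustration.

```python
def reduction_vs_projection(target_fraction_of_base: float, growth_multiple: float) -> float:
    """Fractional cut needed relative to projected (no-action) 2050 emissions.

    target_fraction_of_base: allowed 2050 emissions as a fraction of 2008 emissions
    growth_multiple: ASSUMED projected 2050 emissions as a multiple of 2008 emissions
    """
    if growth_multiple <= 0:
        raise ValueError("growth_multiple must be positive")
    return 1.0 - target_fraction_of_base / growth_multiple


def implied_growth_multiple(target_fraction_of_base: float, reduction: float) -> float:
    """Growth multiple at which the target corresponds to the given reduction."""
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be in [0, 1)")
    return target_fraction_of_base / (1.0 - reduction)


if __name__ == "__main__":
    # Illustrative growth multiples only; none of these come from the IMO or Core Power.
    for multiple in (1.0, 2.0, 3.0, 5.0):
        cut = reduction_vs_projection(0.5, multiple)
        print(f"growth x{multiple:.0f}: cut of {cut:.0%} versus projection")
    print(f"multiple implied by a 90% cut: {implied_growth_multiple(0.5, 0.9):.1f}")
```

Under this reading, halving the 2008 total amounts to a cut of about 90% only if unabated emissions would otherwise reach roughly five times the 2008 level by 2050. With slower growth, the implied cut is smaller.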

90 MWe (about 120,000 hp). Source: Core Power
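The 90 MWe and 120,000 hp figures in the caption above can be checked with a quick unit conversion. This is a minimal sketch that assumes “hp” means mechanical horsepower (about 745.7 W) and ignores any losses between electrical output and shaft power. It also assumes, which the source does not state, that a 90 MWe plant would be assembled from the 15 MWe units mentioned earlier. The function names are hypothetical.

```python
import math

# Assumption: "hp" is mechanical horsepower, roughly 745.7 watts.
WATTS_PER_HP = 745.7


def mwe_to_hp(mwe: float) -> float:
    """Convert megawatts (electric) to horsepower, ignoring conversion losses."""
    return mwe * 1_000_000 / WATTS_PER_HP


def modules_needed(total_mwe: float, module_mwe: float) -> int:
    """Smallest whole number of modules whose combined rating meets total_mwe."""
    if module_mwe <= 0:
        raise ValueError("module_mwe must be positive")
    return math.ceil(total_mwe / module_mwe)


if __name__ == "__main__":
    print(f"90 MWe is about {mwe_to_hp(90):,.0f} hp")
    print(f"15 MWe modules needed for 90 MWe: {modules_needed(90, 15)}")
```

The conversion lands a little above 120,000 hp, consistent with the rounded figure in the caption, and 90 MWe would correspond to six 15 MWe units under the stated assumption.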

I think it is actually fortuitous that the Earth 300 vessel will start its life as a fossil-fueled vessel. From this starting point, Earth 300 will be at the vanguard of a new generation of inherently safe marine nuclear power system development and deployment.

Converting the Earth 300 vessel to nuclear power will move the discussions on commercial marine nuclear power from the academic domain, where it has languished for many decades, to the commercial marine nuclear safety regulatory domain, which has been inactive for decades and likely is not prepared for this new applicant. By being first in line, Earth 300 and Core Power take on substantial licensing risk that certainly will add to the time and cost of their nuclear licensing efforts. However, they are in unique positions as a reactor supplier and a vessel operator to help shape the licensing dialogue pertaining to the use of inherently safe micro-reactors in marine vessels, and the worldwide operation of vessels using such reactors.

The experience gained from converting Earth 300 from fossil to nuclear power will de-risk the nuclear power conversion process for the entire marine transportation industry.

- Regulatory precedents will have been established for the reactor designer and the vessel operator.

- The conversion experience will yield many metrics and lessons learned that will help in planning and executing subsequent conversions.

- Ports around the world will be on notice that commercial nuclear-powered vessels once again are a reality and that appropriate port-specific nuclear safety plans may be required.

In this role alone, Earth 300 will create a path for the commercial marine transportation industry to meet the IMO’s 2050 emission goal. This would be a truly substantive accomplishment that will far outweigh the ship’s public relations accomplishments as a symbol of environmental protection and showcase for environmental research.

I hope Aaron Olivera gets the support he needs to build the Earth 300 ship and subsequently convert it to nuclear power. On one level, the ship is a grand gesture. On another level, the nuclear-powered ship is a substantive step toward a future with zero-carbon commercial marine transportation.

For more information

- Rachel Cormack, “This Insane 984-Foot Explorer Gigayacht Has a Giant ‘Science Sphere’ for Research,” Robb Report, 8 April 2021: https://robbreport.com/motors/marine/explorer-yacht-longer-than-titanic-sports-science-sphere-1234606332/

- Jenna Mehdi, “300m Floating Research Lab EARTH 300 Unveiled,” SuperYachts, 9 April 2021: https://www.superyachts.com/news/story/300m-floating-research-lab-earth-300-unveiled-15367/

- Adam Majendie, “Atomic Superyacht to Offer $3 Million Eco-Tours With Scientists,” Bloomberg, 10 April 2021: https://www.bloomberg.com/news/articles/2021-04-10/atomic-superyacht-to-offer-3-million-eco-tours-with-scientists

- “Approval in principle of innovative projects,” RINA: https://www.rina.org/en/approval-in-principle-of-innovative-projects

- “Safer and Cleaner Shipping,” International Association of Classification Societies (IACS): https://www.iacs.org.uk